Open the Command Prompt as an administrator on the Windows machine.

Try to connect to the Linux machine using ftp and copy a file.

Here we get the message "Not connected".

To resolve this, modify "vsftpd.conf" on the Linux machine as shown below:

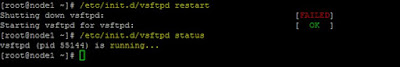

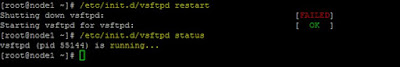

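Now restart the FTP server on the Linux machine, for example with "systemctl restart vsftpd" on systemd-based distributions or "service vsftpd restart" on older ones.

Now try to connect from the Windows machine again.

Here we tried to connect as the root user, but the login failed.

By default, the FTP server does not accept logins from the Linux root user (root is normally listed in the server's "ftpusers" deny list), so log in as a normal user instead.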
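The same refused login can be seen from a script. Below is a minimal sketch using Python's standard "ftplib" module; the function name "try_login" and the host and user names in the comments are hypothetical and not part of the original walkthrough.

```python
import ftplib


def try_login(ftp, user, password):
    """Attempt an FTP login; return True on success, False if the server refuses it."""
    try:
        ftp.login(user=user, passwd=password)
        return True
    except ftplib.error_perm as exc:
        # Permanent (5xx) replies, such as a refused root login, are raised as error_perm.
        print(f"Login as {user!r} refused: {exc}")
        return False


# Hypothetical usage (requires a reachable FTP server, so it is left commented out):
#   with ftplib.FTP("192.168.1.10", timeout=10) as ftp:
#       try_login(ftp, "ftpuser", "user-password")
```

Passing the connection object in as a parameter keeps the function easy to exercise without a live server.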

Copy a file from Windows to Linux using the "put" command at the ftp prompt.

Now check for the file on the Linux machine.
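The upload and the follow-up check can be sketched with Python's standard "ftplib" as well. This is only an illustration under assumptions: "upload_file" is a hypothetical helper, the transfer is done in binary mode, and the check uses the remote listing rather than a shell on the Linux machine.

```python
import ftplib
import os


def upload_file(ftp, local_path):
    """Upload local_path in binary mode and report whether it shows up remotely."""
    remote_name = os.path.basename(local_path)
    with open(local_path, "rb") as fh:
        # STOR is the protocol command that the interactive "put" sends.
        ftp.storbinary(f"STOR {remote_name}", fh)
    # nlst() returns the names in the current remote directory, much like "ls".
    return remote_name in ftp.nlst()


# Hypothetical usage with an already logged-in connection:
#   ok = upload_file(ftp, r"C:\temp\notes.txt")
```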

Basic ftp commands:

1. ls

List the contents of the remote directory (file names only)

2. cd

Change the remote working directory

3. dir

List the contents of the remote directory in detailed (long) format

4. get

Download a file from the Linux machine to the Windows machine

Check for the file on the Windows machine

5. lcd

Change the local working directory on the Windows machine

6. mget

Download multiple files from the Linux machine

Check for the files on the Windows machine

7. mput

Upload multiple files to the Linux machine

Check for the files on the Linux machine

8. rename

Rename a file on the remote machine

Check for the renamed file

9. bye

End the ftp session and exit the ftp program

10. close

End the connection to the remote machine but stay at the ftp prompt

11. help

Display descriptions of the ftp client commands
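The commands above can also be run unattended, because the Windows ftp client accepts a command file through its "-s:" option. The following is a minimal sketch that builds such a file; "build_ftp_script", the host address, the credentials, and the file and directory names are all hypothetical.

```python
def build_ftp_script(host, user, password, commands):
    """Return the text of a command file for the Windows client: ftp -s:<file>."""
    # After "open", the client reads the user name and password from the next two lines.
    lines = [f"open {host}", user, password]
    lines.extend(commands)
    # Make sure the session ends so the client does not wait at the prompt.
    if not commands or commands[-1] != "bye":
        lines.append("bye")
    return "\n".join(lines) + "\n"


script = build_ftp_script(
    "192.168.1.10",   # hypothetical Linux machine
    "ftpuser",        # hypothetical normal (non-root) user
    "secret",         # hypothetical password
    ["lcd C:\\ftp-work", "cd uploads", "mput *.txt", "rename old.txt new.txt", "dir"],
)
print(script)
```

Saved as, say, "upload.txt", the file could be run with "ftp -i -s:upload.txt"; the "-i" switch turns off the per-file prompts that "mput" and "mget" otherwise show. Note that the file holds the password in plain text.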

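In text form, the change to "vsftpd.conf" shown in the screenshots above is to uncomment the following lines (remove the leading "#") and save the file:

local_enable=YES

write_enable=YES

anon_upload_enable=YES

The first two are what allow a normal Linux user to log in and upload; "anon_upload_enable" only affects anonymous logins.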
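If the edit needs to be repeated on several machines, it can be scripted. The sketch below is one possible way to do it, not the method used in the walkthrough: "enable_directives" is a hypothetical helper that works on the file's text, and the location of "vsftpd.conf" (commonly under /etc) is left to the caller.

```python
import re


def enable_directives(conf_text, names):
    """Uncomment each `name=...` line and set it to YES; append the line if absent."""
    lines = conf_text.splitlines()
    remaining = list(names)
    for i, line in enumerate(lines):
        # Matches both "#local_enable=YES" and "local_enable=NO", but not prose comments.
        m = re.match(r"^\s*#?\s*([A-Za-z_]+)\s*=", line)
        if m and m.group(1) in remaining:
            # vsftpd does not accept spaces around "=", so write the line without any.
            lines[i] = f"{m.group(1)}=YES"
            remaining.remove(m.group(1))
    lines.extend(f"{name}=YES" for name in remaining)
    return "\n".join(lines) + "\n"


sample = "# Example config\n#local_enable=YES\nwrite_enable=NO\n"
print(enable_directives(sample, ["local_enable", "write_enable", "anon_upload_enable"]))
```

Only the first occurrence of each directive is rewritten, so a file that repeats a directive further down would still need a manual check.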
